© 2026 NervNow™. All rights reserved.

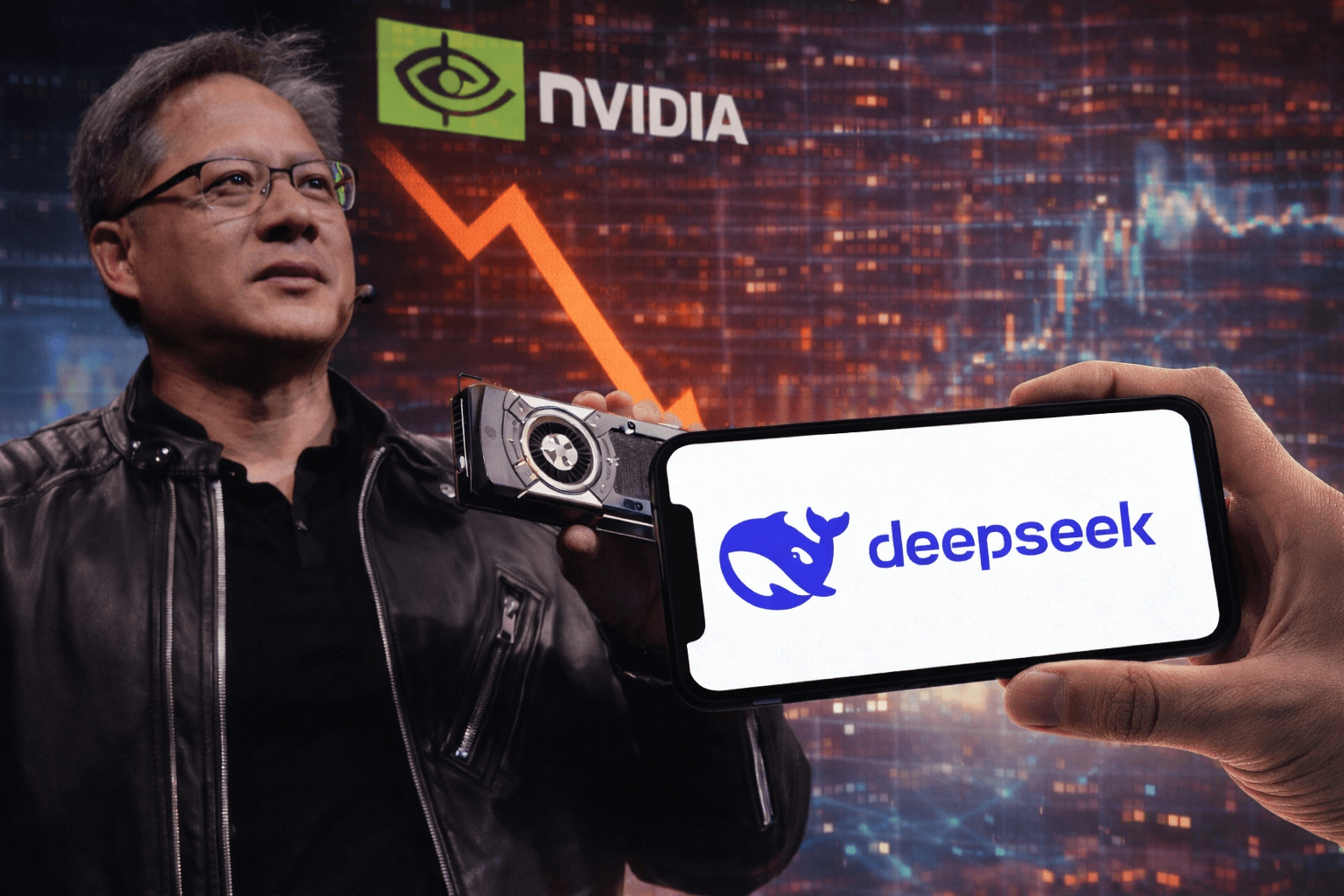

What’s Up With DeepSeek? The AI Release That Crashed Nvidia

When a little-known company in Hangzhou released an open-source AI model on January 20, 2025, it set off, one week later, the largest single-day loss of market value any company has ever recorded and forced the entire artificial intelligence industry to reconsider its fundamental assumptions about cost, scale, and competitive advantage.

On January 27, 2025, Nvidia suffered the largest single-day loss of market value by any company in stock market history. The chip manufacturer’s shares plummeted 17 percent, erasing $589 billion from its market capitalization in a matter of hours. The decline eclipsed the company’s own previous record set just months earlier, when a September 2024 drop had wiped out $279 billion. Broadcom fell more than 17 percent. Micron dropped nearly 12 percent. Advanced Micro Devices shed over 6 percent (Yahoo Finance, 2025). The technology-heavy Nasdaq composite index plunged 3.1 percent while the broader S&P 500 declined 1.5 percent.

The catalyst for this unprecedented market upheaval was not a recession forecast, a geopolitical crisis, or a regulatory action. It was a research paper and an open-source software release from DeepSeek, a Chinese artificial intelligence startup that most investors had never heard of one week earlier.

The Model That Broke the Assumptions

DeepSeek released its R1 reasoning model on January 20, 2025, accompanied by a technical paper that detailed how the model was developed. The paper made claims that contradicted much of what the AI industry believed about what was required to build frontier AI capabilities. According to DeepSeek’s documentation, the R1 model achieved performance comparable to OpenAI’s o1 model across mathematics, coding, and reasoning benchmarks, while the final training run of DeepSeek-V3, the base model underlying R1, was reported to cost only $5.576 million, based on the rental price of Nvidia graphics processing units.

To place this figure in context, OpenAI CEO Sam Altman had previously indicated that foundation model training cost upwards of $100 million, a figure he later suggested was actually higher during a 2024 MIT event (IT Pro, 2025). The contrast was stark enough to fundamentally challenge investor confidence in the economic models underpinning hundreds of billions of dollars in AI infrastructure investment.

The performance benchmarks reported for DeepSeek R1 were equally striking. On the American Invitational Mathematics Examination (AIME), a competition that tests the abilities of the strongest high school mathematics students in the United States, R1 achieved 79.8 percent accuracy on the pass@1 metric, according to Fireworks AI. On the MATH-500 dataset, a standard benchmark for mathematical reasoning, the model scored 97.3 percent (Fireworks AI, 2025). In competitive programming challenges similar to those on Codeforces, R1 achieved an Elo rating of 2,029, outperforming 96.3 percent of human participants.
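For readers unfamiliar with the pass@1 metric, the sketch below shows one common way such a score is computed: the unbiased pass@k estimator widely used for code and math benchmarks, which for k = 1 reduces to the fraction of sampled answers that are correct. The function names and the sample counts are illustrative assumptions, not DeepSeek’s evaluation code.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: answers sampled for the problem, c: how many were correct,
    k: number of attempts the metric allows.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k wrong answers exist, so any k draws include a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_1(results: list[tuple[int, int]]) -> float:
    """Average pass@1 over problems; each item is (samples, correct)."""
    return sum(pass_at_k(n, c, 1) for n, c in results) / len(results)


# Hypothetical tallies for three problems, four samples each.
score = benchmark_pass_at_1([(4, 4), (4, 2), (4, 0)])
print(f"pass@1 = {score:.3f}")
```

Averaging over several samples per problem, rather than scoring a single attempt, makes the reported number less sensitive to sampling noise.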

These results matched or exceeded the performance of proprietary models from OpenAI, Google, and Anthropic on specific benchmarks, particularly those involving mathematical reasoning and code generation. The implication was that a relatively unknown Chinese startup, working under U.S. export restrictions that limited access to the most advanced AI chips, had achieved results comparable to the best-funded American laboratories.

The Cost Controversy: What $5.6 Million Actually Means

The $5.576 million training cost figure became the single most discussed and contested datapoint in technology in late January 2025. DeepSeek’s technical paper included an explicit caveat that this number represented only the official training run and excluded prior research and ablation experiments on architectures, algorithms, or data. This qualification did not prevent the figure from becoming the headline number in nearly every media account of the release.

Skepticism about the true development cost emerged almost immediately. During an interview with The Recursive, Martin Vechev, Director of INSAIT (Institute for Computer Science, Artificial Intelligence, and Technology), noted that the $5.6 million figure was based on using 2,048 H800 cards. However, developing a model of R1’s sophistication requires running training multiple times with variations to test different architectural choices, data mixtures, and hyperparameter settings. The hardware alone, 2,048 H800 chips, would cost between $50 million and $100 million to purchase outright.
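The arithmetic behind these figures is simple enough to check. The sketch below assumes the inputs given in DeepSeek’s V3 technical report, roughly 2.788 million H800 GPU-hours priced at an assumed rental rate of $2 per GPU-hour; neither number appears in this article, so treat both as assumptions. The 2,048-card count and the $50 million to $100 million purchase range come from the paragraph above.

```python
GPU_HOURS = 2_788_000          # assumption: total H800 GPU-hours for the final run
RENTAL_RATE_USD = 2.00         # assumption: rental price per GPU-hour
GPU_COUNT = 2_048              # cluster size cited in the article


def training_run_cost(gpu_hours: float, rate_usd: float) -> float:
    """Rental-equivalent cost of a single training run."""
    return gpu_hours * rate_usd


def wall_clock_days(gpu_hours: float, gpu_count: int) -> float:
    """Days the run would take if every GPU were busy the whole time."""
    return gpu_hours / gpu_count / 24


def implied_price_per_gpu(total_usd: float, gpu_count: int) -> float:
    """Per-card price implied by a purchase estimate for the whole cluster."""
    return total_usd / gpu_count


print(f"run cost:   ${training_run_cost(GPU_HOURS, RENTAL_RATE_USD):,.0f}")
print(f"wall clock: {wall_clock_days(GPU_HOURS, GPU_COUNT):.1f} days")
for total in (50e6, 100e6):
    print(f"${total / 1e6:.0f}M cluster -> "
          f"${implied_price_per_gpu(total, GPU_COUNT):,.0f} per GPU")
```

Under these assumptions the headline figure corresponds to just under two months on the cluster, which is why a rental-based number and a purchase-based number differ by an order of magnitude.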

A detailed analysis from SemiAnalysis went further, estimating that DeepSeek’s total server capital expenditure amounted to approximately $1.3 billion. The report indicated that DeepSeek had access to roughly 50,000 Hopper-generation GPUs, a scale of infrastructure that cannot be built for millions of dollars. Another estimate suggested total hardware costs of $1.6 billion and operating expenses of up to $944 million.

DeepSeek’s founder, Liang Wenfeng, funds the company through his quantitative hedge fund High-Flyer, where he has accumulated approximately $8 billion in assets. The company recruits exclusively from elite Chinese universities including Peking University and Zhejiang University, with AI researchers earning compensation packages reaching $1.3 million annually. These details suggest an organization with substantially more resources than the $5.6 million headline figure would imply.

The more accurate interpretation is that the $5.6 million represents the marginal cost of the final training run, not the total research and development investment required to reach the point where that training run could succeed. However, even this qualified interpretation remains significant. If the final training run that produced a frontier-quality model cost only $5.6 million, it suggests that the incremental cost of iterating on model improvements may be dramatically lower than the industry had assumed.

The Technical Innovation: Reinforcement Learning Without Supervision

The methodological innovation that enabled DeepSeek’s efficiency was the company’s approach to reinforcement learning. Traditional development of reasoning models typically involves supervised fine-tuning on carefully curated datasets of high-quality reasoning examples, followed by reinforcement learning to refine behavior. This process requires substantial investment in data labeling and curation by domain experts.

DeepSeek first developed DeepSeek-R1-Zero by applying reinforcement learning directly to its base model, DeepSeek-V3, without any supervised fine-tuning as a preliminary step. The model was presented with reasoning tasks and received feedback through a reward system based primarily on two factors: accuracy rewards that evaluated whether outputs were correct, and format rewards that encouraged the model to structure reasoning within designated tags.
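A rule-based reward of this kind can be sketched in a few lines. The version below is an illustration, not DeepSeek’s implementation: the `<think>` and `<answer>` tag names, the exact-match answer check, and the 0.5 weight on the format reward are all assumptions chosen to keep the example small.

```python
import re

# Assumed output template: reasoning inside <think>, final answer inside <answer>.
TEMPLATE = re.compile(
    r"^<think>(.+?)</think>\s*<answer>(.+?)</answer>\s*$", re.DOTALL
)


def format_reward(output: str) -> float:
    """1.0 if the output follows the assumed tag template, else 0.0."""
    return 1.0 if TEMPLATE.match(output.strip()) else 0.0


def accuracy_reward(output: str, reference: str) -> float:
    """1.0 if the tagged answer exactly matches the reference, else 0.0."""
    match = TEMPLATE.match(output.strip())
    if match is None:
        return 0.0  # no parseable answer, so it cannot be scored as correct
    return 1.0 if match.group(2).strip() == reference.strip() else 0.0


def total_reward(output: str, reference: str, format_weight: float = 0.5) -> float:
    """Combine both signals; the weight is a hypothetical choice."""
    return accuracy_reward(output, reference) + format_weight * format_reward(output)


print(total_reward("<think>2 + 2 = 4</think><answer>4</answer>", "4"))  # 1.5
print(total_reward("<think>2 + 2 = 5</think><answer>5</answer>", "4"))  # 0.5
print(total_reward("4", "4"))                                           # 0.0
```

Because both signals are computed by fixed rules rather than by a learned reward model, they are cheap to evaluate and hard for the policy to exploit, though they only work for tasks whose answers can be checked mechanically.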

Through this process, DeepSeek-R1-Zero autonomously developed sophisticated reasoning behaviors including chain-of-thought reasoning, self-verification, reflection on potential errors, and strategy adaptation. These capabilities emerged through the reinforcement learning process itself rather than through explicit instruction or human-labeled examples. The result was a model that could solve complex problems through extended deliberation but suffered from significant practical limitations including poor readability, language mixing between English and Chinese, and occasional endless repetition.

To address these issues, DeepSeek developed its production R1 model using a multi-stage training pipeline. The process incorporated a small cold-start dataset of high-quality reasoning examples to guide initial behavior, followed by two reinforcement learning stages aimed at discovering improved reasoning patterns and aligning with human preferences. This hybrid approach demonstrated that advanced reasoning capabilities could be developed without the massive supervised fine-tuning datasets that companies like OpenAI were believed to depend on.

DeepSeek also relied on Group Relative Policy Optimization, an algorithm from its earlier research that estimates a baseline by averaging scores across a group of outputs sampled for the same prompt rather than requiring a separate critic model to provide feedback during training. Because the critic is typically comparable in size to the model being trained, removing it can cut the compute resources needed for the reinforcement learning process roughly in half compared to traditional methods.
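The core of the group-relative idea is the advantage calculation, sketched below. Each sampled output is scored against the mean reward of its own group, so no learned value function is needed. Dividing by the group’s standard deviation follows the published description of the algorithm; the function name and the small `eps` guard are assumptions for this example, and the full training objective (clipping, KL penalty) is omitted.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Advantage of each output relative to its own sampling group.

    rewards: scores for several outputs sampled from the same prompt.
    The group mean plays the role a critic model's value estimate would play.
    """
    baseline = mean(rewards)
    spread = pstdev(rewards)
    # eps avoids division by zero when every output received the same reward.
    return [(r - baseline) / (spread + eps) for r in rewards]


# Four hypothetical outputs for one prompt: two correct, two incorrect.
print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
# Outputs above the group mean get positive advantages, those below get negative.
```

When every output in a group earns the same reward, all advantages are zero and the prompt contributes no learning signal, which is one reason group size and task difficulty matter in practice.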

Open Source Under MIT License: The Strategic Choice

DeepSeek released R1 under the MIT License, one of the most permissive open-source licenses available (GitHub DeepSeek-R1). The license explicitly permits commercial use, modifications, derivative works, and distillation for training other large language models. The only requirements are maintaining the copyright notice and including the license text with any distribution.

This licensing decision fundamentally differentiated DeepSeek from other frontier AI developers. OpenAI had transitioned from open source to increasingly restrictive access models. Meta’s Llama models, while often described as open source, operate under custom licenses that impose restrictions on commercial use for services with more than 700 million monthly active users. DeepSeek’s choice to use a standard, well-understood permissive license removed legal uncertainty and maximized adoption potential.

Alongside the main R1 model, DeepSeek released six distilled versions ranging from 1.5 billion to 70 billion parameters, built on top of Meta’s Llama and Alibaba’s Qwen base architectures. The distillation process transfers reasoning capabilities from the large R1 model into smaller architectures that can run on more modest hardware. DeepSeek-R1-Distill-Qwen-32B was reported to outperform OpenAI’s o1-mini across various benchmarks, achieving new state-of-the-art results for dense models of that size. Even the 1.5 billion parameter distilled model demonstrated capabilities that surpassed much larger general-purpose models on specific mathematical reasoning tasks.

The open-source release meant that any organization with sufficient technical capability could download the model weights, deploy them on their own infrastructure, and adapt them to specific use cases without ongoing API costs or dependency on DeepSeek’s continued service availability. For regulated industries like healthcare, finance, and defense, where data sovereignty and auditability requirements often preclude use of cloud-based AI services, this represented a qualitative change in what capabilities were accessible.

Market Reaction: The Largest Loss in History

The market reaction to DeepSeek R1 reflected investor uncertainty about fundamental assumptions underpinning AI infrastructure valuations. Nvidia’s $589 billion single-day loss represented more market value than the total capitalization of all but 13 companies in the United States (CNN Business, 2025). The company’s stock price fell to $118.58 per share, bringing its market capitalization to $2.90 trillion (The National, 2025).

The selling extended across the semiconductor sector. Taiwan Semiconductor Manufacturing Company, which manufactures chips for Nvidia and others, experienced significant declines. ASML Holding, the Dutch company that produces the extreme ultraviolet lithography machines essential for manufacturing advanced chips, saw its stock price drop substantially (IG International, 2025). The ripple effects reached cloud infrastructure providers and enterprise software companies that had integrated AI capabilities as core value propositions.

The panic reflected a specific fear: if DeepSeek could achieve frontier performance using older H800 chips that were already subject to U.S. export restrictions, and if the training costs were indeed a small fraction of what American companies were spending, then the entire economic logic supporting massive capital expenditures on AI infrastructure might be flawed. Meta had announced plans to spend upward of $65 billion on AI development in 2025 alone (CNN Business, 2025). Microsoft, Google, Amazon, and others had committed to similar massive infrastructure buildouts.

Investor concern centered on two possibilities. First, that demand for Nvidia’s most advanced GPUs might collapse if comparable results could be achieved with less expensive hardware and more efficient algorithms. Second, that the margins AI service providers expected to earn from their infrastructure investments might not materialize if competitors could deliver similar capabilities at dramatically lower costs.

Bernstein analysts characterized the range of interpretations they observed in the market, from “that’s really interesting” to speculation about “the death-knell of the AI infrastructure complex as we know it” (CNBC, 2025). The firm’s own assessment was more measured, noting that while DeepSeek’s models appeared impressive, calling them “miracles” or assuming they invalidated all existing AI infrastructure investment was overblown (CNBC, 2025).

Industry Response: From Dismissal to Acknowledgment

Initial responses from the AI industry fell along predictable lines. Some voices dismissed the DeepSeek results as either exaggerated or dependent on undisclosed costs. OpenAI accused DeepSeek of “distilling” its models, suggesting that DeepSeek had used OpenAI’s API outputs to train R1, essentially copying capabilities rather than developing them independently. The accusation, if substantiated, would undermine claims that DeepSeek had achieved its results through novel methodology rather than derivative work.

Other responses were more conciliatory. OpenAI CEO Sam Altman wrote on social media that “deepseek’s r1 is an impressive model, particularly around what they’re able to deliver for the price” and added “we will obviously deliver much better models and also it’s legit invigorating to have a new competitor”. Marc Andreessen, the venture capitalist and technology investor, called DeepSeek R1 “one of the most amazing and impressive breakthroughs I have ever seen” and described it as “a profound gift to the world” due to its open-source nature.

David Sacks, the venture capitalist whom President Trump appointed to oversee AI and cryptocurrency policy, said that DeepSeek’s application “shows that the AI race will be very competitive”. The statement implicitly acknowledged that the assumption of sustained American dominance in AI development might require reevaluation.

An Nvidia spokesperson issued a careful statement calling DeepSeek “an excellent AI advancement and a perfect example of Test Time Scaling” while noting that “inference requires significant numbers of NVIDIA GPUs and high-performance networking”. The statement attempted to redirect attention toward inference costs, where Nvidia’s hardware advantage remains more defensible, rather than training costs, where DeepSeek had demonstrated dramatic efficiency.

Some analysts expressed skepticism that American enterprises would adopt DeepSeek directly. Dan Ives of Wedbush Securities argued “No U.S. Global 2000 is going to use a Chinese startup DeepSeek to launch their AI infrastructure and use cases”. The assessment reflected concerns about data sovereignty, regulatory compliance, and geopolitical risk, but missed a crucial point: the value of DeepSeek’s release was not primarily in the service itself but in the methodology and open-source weights that others could adapt.

Practical Adoption: From Azure to Meta

Within days of R1’s release, practical adoption began across the industry. Microsoft made DeepSeek R1 available through Azure, its cloud computing platform. This integration allowed Azure customers to access R1’s capabilities through standard API endpoints, effectively commoditizing reasoning model access and putting competitive pressure on pricing for similar capabilities from OpenAI and Anthropic.

Meta Platforms announced it was examining DeepSeek’s technical innovations to determine what could be applied to its own AI development efforts. Given Meta’s substantial investment in open-source AI through its Llama model family, the company’s interest in DeepSeek’s reinforcement learning methodology represented validation from one of the few organizations with the resources and expertise to evaluate the technical claims independently.

Within the research community, DeepSeek R1 became one of the most popular models on Hugging Face, the primary platform for sharing and distributing AI models. Downloads exceeded 10 million within the first months. Researchers at leading universities began incorporating R1 into their work, both as a research subject for understanding emergent reasoning capabilities and as a practical tool for AI-assisted research.

The pricing impact was immediate and substantial. DeepSeek’s API charged $8 per million tokens for both input and output, compared to OpenAI’s o1 pricing of $15 per million input tokens and $60 per million output tokens. At those rates the saving was roughly 47 percent on input tokens and 87 percent on output tokens, or approximately 85 percent for the output-heavy workloads typical of reasoning models. Other providers adjusted their pricing structures in response.
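Because the two price lists are structured differently, the size of the saving depends on the mix of input and output tokens in a given job. The sketch below works through that arithmetic using only the per-million-token prices quoted above; the token counts in the example are hypothetical.

```python
# Prices in dollars per million tokens, as quoted in the article: (input, output).
DEEPSEEK_R1 = (8.0, 8.0)
OPENAI_O1 = (15.0, 60.0)


def job_cost(input_tokens: int, output_tokens: int,
             input_price: float, output_price: float) -> float:
    """Dollar cost of one job given per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def saving(input_tokens: int, output_tokens: int) -> float:
    """Fractional cost reduction from using R1 instead of o1 for the same job."""
    if input_tokens + output_tokens == 0:
        raise ValueError("job must contain at least one token")
    r1 = job_cost(input_tokens, output_tokens, *DEEPSEEK_R1)
    o1 = job_cost(input_tokens, output_tokens, *OPENAI_O1)
    return 1 - r1 / o1


print(f"input only:        {saving(1_000_000, 0):.1%}")
print(f"output only:       {saving(0, 1_000_000):.1%}")
print(f"1:4 input/output:  {saving(1_000_000, 4_000_000):.1%}")
```

Reasoning models emit long chains of intermediate tokens, so real workloads tend to sit near the output-heavy end of this range.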

The Geopolitical Dimension: Export Controls and Strategic Competition

DeepSeek’s achievement carried particular significance because it was accomplished under U.S. export restrictions specifically designed to prevent Chinese AI companies from accessing the most advanced hardware. The restrictions, imposed during the Biden administration and tightened multiple times between 2022 and 2024, limited China’s ability to purchase Nvidia’s most powerful AI chips and access advanced cloud computing resources.

Liang Wenfeng, DeepSeek’s founder, had purchased a stockpile of Nvidia A100 chips before export restrictions made such purchases impossible. The A100, while powerful, represented older technology compared to the H100 and H200 chips that American AI labs were using for frontier model development. DeepSeek’s work with H800 chips, a modified version of the H100 created specifically for the Chinese market with reduced capabilities to comply with export restrictions, demonstrated that hardware limitations could be partially compensated through algorithmic innovation.

The final Biden administration export control measures, announced in the administration’s closing days, further restricted China’s ability to access AI chips through resellers and remote data center access. These restrictions aimed to prevent workarounds that had allowed Chinese organizations to access more advanced hardware than U.S. policy intended to permit. However, DeepSeek’s demonstration that frontier capabilities could be achieved with restricted hardware raised questions about whether export controls could effectively slow Chinese AI development or would merely force Chinese researchers to develop more efficient methods.

President Trump’s announcement of the Stargate AI project, a consortium involving SoftBank, Oracle, OpenAI, and UAE-based MGX planning to invest $100 billion immediately in U.S. AI infrastructure, represented a direct competitive response. The scale of investment reflected a determination to maintain American leadership through resource commitment, but the announcement came against the backdrop of DeepSeek’s demonstration that such leadership might not be solely a function of capital investment.

Technical Verification: Peer Review and Replication

One element that distinguished DeepSeek R1 from typical AI model releases was the level of technical transparency provided. The research paper included detailed descriptions of the training methodology, the reinforcement learning reward structure, the architectural choices, and the specific benchmarks used for evaluation. This transparency allowed independent verification in ways that proprietary model announcements do not.

By September 2025, DeepSeek R1 had become the first peer-reviewed large language model, with its methodology and results validated through academic review processes. The peer review addressed initial skepticism about whether the reported results could be replicated and whether the claimed costs were representative of the true development investment.

Independent laboratories with access to comparable hardware began attempting to replicate DeepSeek’s methodology. These replication efforts provided increasing confidence that the core claims about reinforcement learning-driven reasoning development were valid, even as debates continued about the total costs involved in reaching the point where the methodology could succeed.

Carnegie Mellon University researchers described the training process as analogous to a child learning through video games, constantly discovering through trial and error which actions yield rewards. This framing helped explain how the model could develop sophisticated reasoning strategies without explicit instruction, a result that had seemed implausible to many researchers before seeing the detailed methodology.

The May 2025 Update: Continued Development

DeepSeek released an upgraded version, DeepSeek-R1-0528, on May 28, 2025. The update introduced several key improvements including enhanced benchmark performance across reasoning and factual tasks, reduced hallucinations, support for JSON output and function calling, and improved interaction quality. Notably, the update maintained backward compatibility with existing API endpoints, allowing developers to benefit from improvements without code changes.

Performance improvements in the update were substantial. Benchmark scores increased 5 to 30 percentage points across different tasks compared to the January release. The model’s reasoning depth effectively doubled, using significantly more internal tokens to work through complex problems before producing final answers. This demonstrated that DeepSeek was continuing active development rather than treating R1 as a one-time release.

One year after the initial R1 release, in January 2026, DeepSeek R1 remained the most popular open-source reasoning model on Hugging Face. The model had catalyzed what observers described as a second layer of Chinese innovation with rapid iterations, distillation into smaller models, and integration into industrial applications. Rumors circulated about a forthcoming V4 model that would further advance capabilities in long-context reasoning and code generation.

Economic Implications: The Commoditization of Intelligence

The broader economic implication of DeepSeek R1 extends beyond any specific model or company. By demonstrating that frontier reasoning capabilities can be developed at a fraction of historical costs and then distributed freely under permissive licenses, DeepSeek accelerated what economists might call the commoditization of artificial intelligence.

In markets where products become commoditized, prices fall toward marginal cost and profit margins compress. Companies can no longer sustain premium pricing based on exclusive access to scarce capabilities. Competition shifts toward differentiation through integration, user experience, specialized applications, or other factors that cannot be easily replicated.

The AI industry through 2024 had operated on the assumption that frontier model capabilities would remain scarce, concentrated in a small number of organizations with the resources to train at scale. This scarcity supported premium pricing for API access and created the potential for sustained high margins. DeepSeek challenged this assumption by showing that the marginal cost of training frontier models might be orders of magnitude lower than total R&D investment, and that the R&D itself could be accomplished with far less capital than the industry leaders were deploying.

The response from major AI laboratories reflected recognition of this shift. OpenAI’s development of GPT-5, released in August 2025, incorporated efficiency lessons from DeepSeek’s work, including dynamic compute allocation that adjusts how much thinking time is devoted to each query based on complexity. This represented a strategic pivot from “build the biggest model” toward “build the most efficient model for each use case”.

Smaller companies and enterprises that had been priced out of using frontier AI capabilities found that DeepSeek’s distilled models provided accessible alternatives. Amazon and Salesforce integrated DeepSeek-derived reasoning capabilities into their platforms without the prohibitive API costs that had previously made such integration economically questionable.

The Infrastructure Paradox: More Efficiency, More Compute

A counterintuitive possibility emerged from the DeepSeek release: that making AI more efficient might actually increase total compute demand rather than decrease it. This phenomenon, known in economics as the Jevons paradox, occurs when efficiency improvements lower the cost of using a resource so much that total consumption increases.

If reasoning capabilities can be delivered at 15 percent of previous costs, applications that were economically marginal become viable. Use cases that required careful rationing of expensive API calls become feasible for continuous deployment. The total number of AI queries across all applications could increase faster than the per-query cost decreases, resulting in higher aggregate demand for computing infrastructure.

Nvidia’s own response to DeepSeek implicitly acknowledged this possibility. The company’s statement emphasized that inference requires significant numbers of NVIDIA GPUs and high-performance networking. While DeepSeek had demonstrated efficiency in training, serving the model to users at scale would still require substantial infrastructure. Nvidia reported achieving 36 times higher throughput on DeepSeek R1 between January and March 2025 through combined hardware and software optimizations, translating to an approximately 32-fold improvement in cost per token, according to the Nvidia Technical Blog.

The infrastructure question remained unresolved as of early 2026. Some analysts predicted that efficiency gains would indeed reduce infrastructure investment, putting pressure on Nvidia and data center operators. Others argued that the expansion in AI adoption enabled by lower costs would more than offset efficiency gains, sustaining infrastructure demand growth. The actual outcome would depend on price elasticity of demand for AI capabilities, a parameter the industry was still discovering.
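How the paradox plays out depends entirely on that elasticity, which a toy model makes concrete. The sketch below assumes a constant-elasticity demand curve and assumes that the price per query falls in proportion to the compute per query; both are simplifications for illustration, and the elasticity values are hypothetical rather than measured.

```python
def demand_ratio(price_ratio: float, elasticity: float) -> float:
    """New query volume relative to old under constant-elasticity demand."""
    return price_ratio ** (-elasticity)


def compute_ratio(price_ratio: float, elasticity: float) -> float:
    """New total compute relative to old.

    Assumes compute per query shrinks by the same factor as the price,
    so total compute = (queries) x (compute per query).
    """
    return demand_ratio(price_ratio, elasticity) * price_ratio


PRICE_RATIO = 0.15  # reasoning delivered at 15 percent of the previous cost

for elasticity in (0.5, 1.0, 1.5):
    ratio = compute_ratio(PRICE_RATIO, elasticity)
    trend = "rises" if ratio > 1.0001 else "falls" if ratio < 0.9999 else "is flat"
    print(f"elasticity {elasticity}: total compute {trend} ({ratio:.2f}x)")
```

In this model total compute demand grows only when elasticity exceeds 1, which is the quantitative form of the disagreement between the two camps of analysts described above.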

Implications for Research and Development Strategy

For organizations developing AI capabilities, DeepSeek forced a reassessment of resource allocation between pre-training and post-training. The traditional model had concentrated investment in pre-training: assembling massive datasets, purchasing or building large compute clusters, and running extended training jobs to produce base models. Post-training, the process of fine-tuning and aligning models after pre-training, received comparatively less investment.

DeepSeek demonstrated that post-training techniques, particularly reinforcement learning, could produce capabilities that matched or exceeded what other organizations achieved through much larger pre-training investments. This suggested that the marginal return on pre-training expenditure might be declining while the marginal return on post-training research remained high.

Several research groups began openly publishing post-training techniques that meaningfully improved open-source base models. The commoditization of base models, accelerated by DeepSeek’s releases, redirected competitive attention toward the layer where base models are adapted, specialized, and made useful for specific purposes. This represented a more distributed, more accessible form of competition than the capital-intensive race to build the largest pre-training runs.

For organizations without the resources to train frontier models from scratch, the implications were encouraging. Access to capable base models through open-source releases meant that competitive advantage could be built through domain expertise, specialized data, and superior post-training rather than through sheer capital expenditure. For the largest AI laboratories, the implication was more sobering: that the economic moat around frontier capabilities was narrower than previously believed.

Conclusion: The New AI Landscape

DeepSeek R1 did not end American leadership in artificial intelligence. The major U.S. laboratories retained advantages in talent, capital, established user bases, and integration with cloud platforms. What DeepSeek did accomplish was demonstrating that leadership in AI is not solely a function of resource commitment, that the barriers to achieving frontier capabilities are lower than widely assumed, and that open-source development can operate on timelines competitive with proprietary efforts.

The market reaction to DeepSeek R1, while extreme in magnitude, reflected genuine uncertainty about the economic foundations of the AI industry. If training costs are dramatically lower than reported, if reasoning capabilities can be developed through algorithmic innovation rather than brute-force scale, and if the results can be freely distributed to anyone who wants them, then the entire investment thesis supporting hundreds of billions in AI infrastructure spending requires reevaluation.

As of early 2026, the industry was still processing these implications. Some predictions from late January 2025 had proven overblown. Nvidia’s stock recovered partially from its initial crash, though not to previous highs. American AI laboratories continued receiving massive funding. The death of the AI infrastructure complex proclaimed by some observers had not materialized.

But the landscape had changed in ways that would persist. Expectations about the capital required to compete in AI development had adjusted downward. The viability of open-source development as a competitive strategy had been validated at the frontier of capabilities. The assumption that Chinese AI development would lag American efforts by years due to export restrictions had been directly challenged.

Marc Andreessen’s characterization of DeepSeek R1 as AI’s “Sputnik moment” captured something important about the psychological impact. Like the 1957 Soviet satellite launch that shocked Americans who had assumed technological superiority, DeepSeek demonstrated that competition in artificial intelligence would come from directions and through methods that the established leaders had not fully anticipated. The industry that existed before January 20, 2025 and the industry that existed after were operating under fundamentally different assumptions about what is required to achieve frontier AI capabilities and who can realistically compete to build them.