© 2026 NervNow™. All rights reserved.

Trump Bans Anthropic; OpenAI Wins Federal Deal Hours Later

The US government banned Anthropic for refusing to enable mass surveillance and autonomous weapons. Here is how it happened in 24 hours.

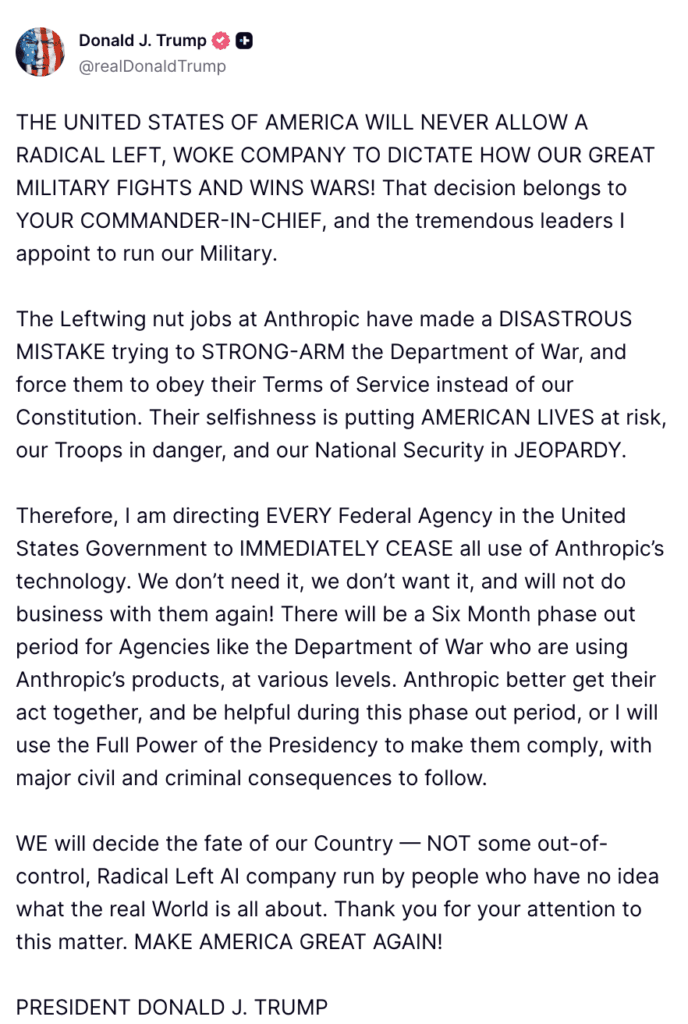

A week of escalating confrontation between Anthropic and the Pentagon ended Friday with President Trump ordering every federal agency to stop using Claude, Defense Secretary Pete Hegseth applying a designation historically reserved for foreign adversaries, and rival OpenAI announcing it had reached an agreement with the Defense Department that it said included the same safeguards Anthropic had refused to drop.

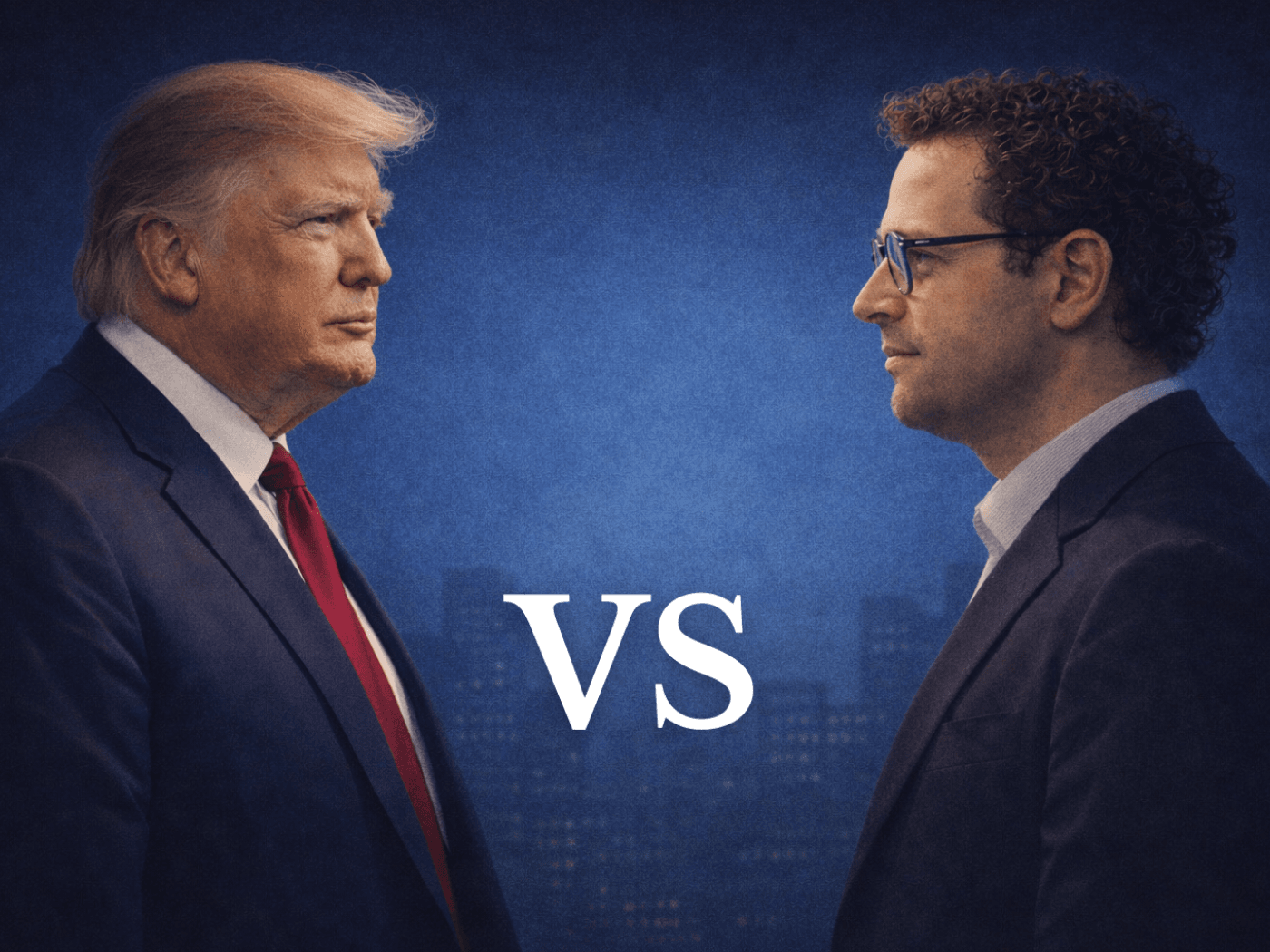

President Donald Trump on Friday ordered all U.S. federal agencies to immediately stop using technology from artificial intelligence company Anthropic, capping a weeklong standoff between the Pentagon and the San Francisco-based AI startup over safeguards on its Claude models. The order came roughly one hour before a Pentagon deadline for Anthropic to drop its restrictions or face consequences, a deadline that passed without an agreement.

In a post on Truth Social, Trump directed every federal agency to “immediately cease” all use of Anthropic’s technology, describing the company’s refusal to comply with Pentagon demands as a “disastrous mistake.” Most agencies were ordered to cut ties immediately. The Defense Department, which has Anthropic’s Claude embedded across several classified military platforms under a $200 million contract signed in July 2025, was given a six-month phase-out period.

Defense Secretary Pete Hegseth followed Trump’s post by announcing on X that he was directing the Pentagon to designate Anthropic a “Supply-Chain Risk to National Security,” a label that has historically been applied to companies considered extensions of foreign adversaries, and that has never before been publicly applied to an American company. Under Hegseth’s directive, no contractor, supplier, or partner that does business with the U.S. military may conduct any commercial activity with Anthropic, effective immediately.

Anthropic said in a statement Friday evening that it had not yet received direct communication from the Pentagon or the White House on the status of negotiations when the designations were announced. The company said it would challenge any supply chain risk designation in court, calling it “legally unsound” and “a dangerous precedent for any American company that negotiates with the government.” Anthropic also contested the scope of Hegseth’s directive, arguing that the Defense Secretary does not have the statutory authority to bar military contractors from doing business with Anthropic beyond the Pentagon’s own contracts.

At the center of the standoff were two restrictions Anthropic had maintained since the beginning of its Pentagon relationship: that Claude would not be used for mass domestic surveillance of Americans, and that it would not be used to power fully autonomous weapons systems, meaning those that remove human judgment entirely from targeting and engagement decisions. Anthropic said both uses were either incompatible with democratic values or beyond what today’s frontier AI models can reliably support.

The Pentagon’s position was that it required AI systems to be available for “all lawful purposes,” and that it had no intention of deploying Claude in the ways Anthropic feared. Military officials maintained that Anthropic’s insistence on contractual guardrails amounted to a private company attempting to override operational military judgment. Pentagon spokesperson Sean Parnell wrote on X that the government would “not let ANY company dictate the terms regarding how we make operational decisions.”

The confrontation became public Thursday when Anthropic CEO Dario Amodei published a statement saying the company “cannot in good conscience accede” to the Pentagon’s demands, even after receiving revised contract language it said made “virtually no progress” on the two disputed issues. Amodei said new language framed as a compromise was paired with provisions that would allow the safeguards to be disregarded at will. He said threats from the Pentagon, including the supply chain risk designation and the potential invocation of the Cold War-era Defense Production Act, would not change Anthropic’s position.

Amodei and Hegseth met in person at the Pentagon on Tuesday. A source familiar with the meeting described it as cordial. The situation deteriorated in the days that followed.

OpenAI moves in the same night

Hours after Trump’s order, OpenAI CEO Sam Altman announced on X that his company had reached an agreement with the Defense Department to deploy its models on the Pentagon’s classified networks. Altman said the agreement included the same safety principles Anthropic had sought. “Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems,” Altman wrote. “The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.”

Altman added that OpenAI was asking the Defense Department to offer these same terms to all AI companies. OpenAI’s existing Pentagon contract, signed in 2025 for $200 million, had been for non-classified use cases including everyday office tasks. The new agreement extends OpenAI’s presence into classified military networks, the territory previously held by Anthropic.

Earlier on Friday, before the ban was announced, Altman told CNBC he trusted Anthropic as a company and believed it genuinely cared about safety. In a memo to OpenAI employees on Thursday, Altman had said OpenAI shared the same red lines as Anthropic on autonomous weapons and mass surveillance, according to reporting by Axios. The sequence of events, with Altman publicly backing Anthropic’s position on Thursday and OpenAI filling the contract gap on Friday night, drew immediate attention from industry observers.

“Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles.”

Sam Altman, CEO, OpenAI · X, Feb. 27, 2026

Industry and congressional reaction

The AI industry moved largely in support of Anthropic throughout Friday. An open letter opposing the Pentagon’s approach circulated among tech and AI leaders; its signatories included 11 OpenAI employees, among them researchers Boaz Barak and William Feng, as well as executives from other firms. The letter said the federal government “should not retaliate against a private company for declining to accept changes to a contract.” More than 100 Google employees had separately written to the company’s chief scientist Jeff Dean earlier in the week, requesting similar restrictions on how Google’s Gemini models are used by the military. Employees at Microsoft and Amazon also reportedly pressed their management to adopt comparable limits.

Elon Musk took the opposite position, posting on X that “Anthropic hates Western Civilization.” Musk’s own AI chatbot, Grok, is reported to be in line to receive access to classified military networks, a development that analysts noted may benefit directly from Anthropic’s removal.

Congressional reaction was largely critical of the administration’s handling of the dispute. Sen. Mark Warner of Virginia, the ranking member of the Senate Intelligence Committee, said Trump’s directive “combined with inflammatory rhetoric attacking that company, raises serious concerns about whether national security decisions are being driven by careful analysis or political considerations.” Sens. Ed Markey of Massachusetts and Chris Van Hollen of Maryland wrote to Hegseth calling the Pentagon’s threats “a chilling abuse of government power.”

Retired Air Force Gen. Jack Shanahan, a former leader of the Defense Department’s AI initiatives, wrote on social media that Claude is already widely used across classified government settings, and that Anthropic’s red lines are “reasonable.” He said the AI models that power chatbots like Claude are “not ready for prime time in national security settings,” specifically for fully autonomous weapons. “They’re not trying to play cute here,” he wrote on LinkedIn Thursday.

What comes next

Anthropic faces a six-month wind-down of its Pentagon relationship, during which it has said it will work to ensure a smooth transition to another provider without disruption to ongoing military operations. The company said it remains ready to resume discussions if the Defense Department is willing to accept the two safeguards. That outcome appears unlikely under the current administration.

The supply chain risk designation is subject to legal challenge. Anthropic has argued that under federal law, the designation applies only to Claude’s use in Pentagon contracts; it cannot extend to how contractors use Claude to serve other customers. If that interpretation holds, the practical reach of Hegseth’s directive may be more limited than its language suggests.

The broader question hanging over the AI industry is whether the standoff sets a precedent. Several major AI companies, Google, Microsoft, and Amazon among them, also hold Pentagon contracts. OpenAI’s move to fill the gap Anthropic leaves, while publicly endorsing the same safety principles, will be closely watched as an indicator of how those relationships develop. The Defense Department has hinted that it will require AI contractors to accept unrestricted lawful use as a baseline condition going forward.

For Anthropic, the immediate financial exposure from losing the $200 million Pentagon contract is manageable; the company is valued at more than $60 billion and is in the midst of significant commercial growth. The supply chain risk designation, if it survives legal challenge, carries more serious implications: it would bar any military contractor from doing business with the company, potentially affecting partnerships across a wide range of enterprise and government-adjacent work.

Disclaimer: This article is based on reporting by CNBC, CBS News, CNN, NBC News, NPR, Fortune, Bloomberg, The Washington Post, Federal News Network, and Axios. All quotes are drawn from public statements, verified social media posts, and on-record reporting from the above outlets. NervNow has not independently verified internal government communications or unattributed sourcing cited by third parties.