© 2026 NervNow™. All rights reserved.

How to Evaluate AI Vendor Claims: A Technical Guide for CTOs and AI Leaders

What an AI vendor mean when he say hallucination-free, enterprise-grade, and 95% accurate. How to evaluate AI vendor claims: A technical guide for CTOs and AI leaders

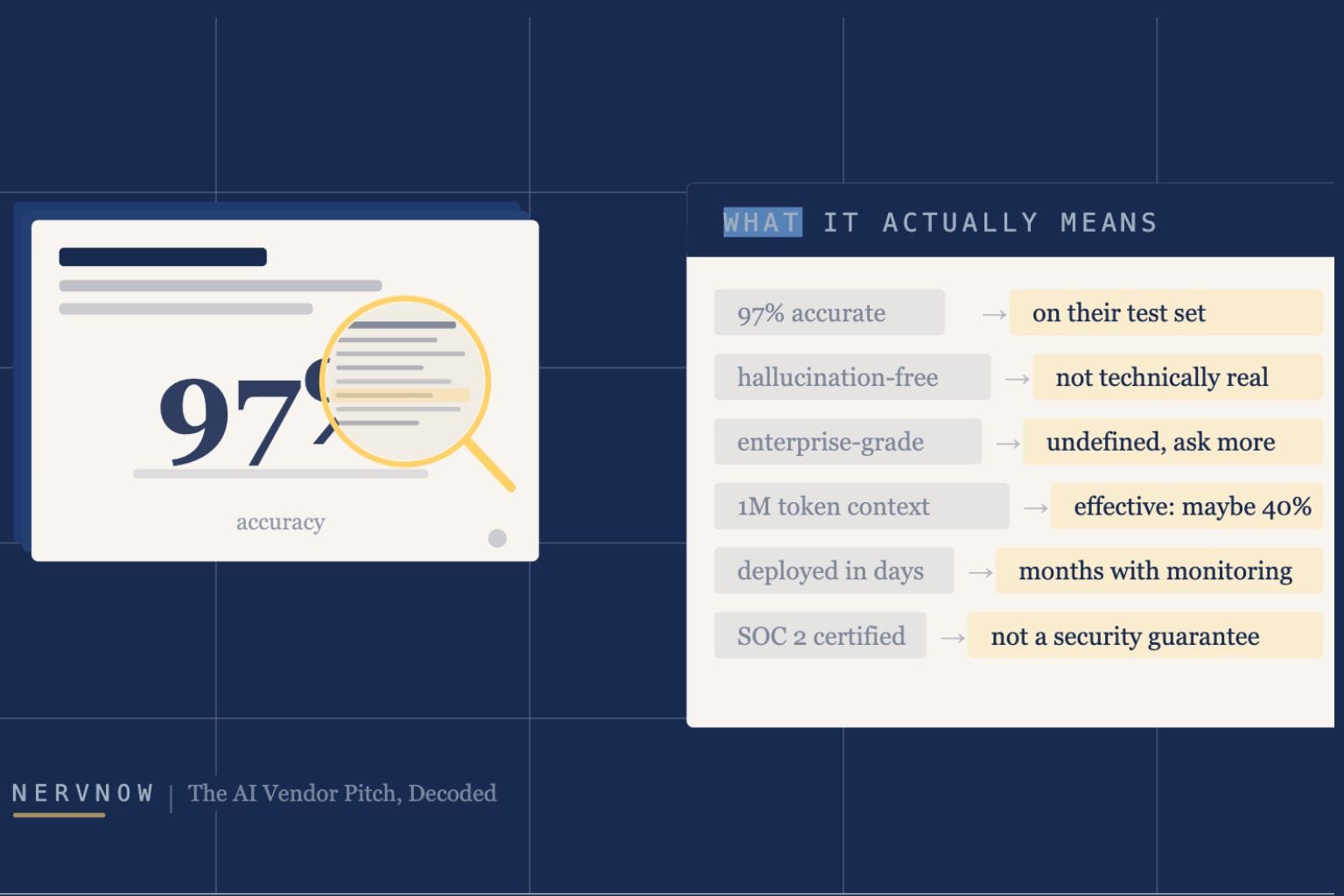

The AI Vendor Pitch, Decoded

Accuracy rates, context windows, enterprise-grade, hallucination-free: a technical evaluator’s guide to the language vendors use, what it obscures, and what to ask instead.

Every AI vendor pitch follows a similar arc. There is a slide with a large percentage. Another with a benchmark comparison. A phrase like enterprise-grade appears at least twice. The word hallucination is used, usually to say the product does not have one.

The problem is that these claims are technically accurate and practically meaningless unless you already know what questions to ask. This piece is a translation guide for people who evaluate, procure, or build on top of AI systems. Six claim categories. For each: what the vendor means, what the claim actually hides, and what a sharp evaluator asks in response.

1. Benchmark Claims

Benchmarks are the most widely cited and least useful numbers in AI procurement. MMLU (Massive Multitask Language Understanding) tests a model on multiple-choice questions across 57 academic subjects. HumanEval tests code generation on a set of Python programming problems. These are standardized, reproducible, and almost entirely disconnected from what your system will actually do.

There are three specific problems with benchmark claims. First, benchmarks measure the wrong thing. A score on academic multiple-choice questions tells you nothing about whether the model handles your domain’s edge cases, follows your output format requirements, or maintains coherence across a long conversation. The gap between benchmark performance and task performance can be enormous.

Second, benchmark contamination is a real and underreported problem. Because benchmark datasets are public, models can be trained intentionally or accidentally on data that overlaps with the test set. A model that has seen the answers during training will score higher without being more capable, and there is no independent auditing process that makes this visible.

Third, benchmark scores are sensitive to evaluation method. The same model, on the same benchmark, can score materially differently depending on whether chain-of-thought prompting is used, whether few-shot examples are provided, or how the output is parsed. Vendors naturally report the configuration that produces the best result.

Which benchmark, on which split, with which prompting method?

Can you run an evaluation on a sample of our actual data before we proceed?

What does performance look like on tasks that resemble our specific use case?

The only benchmark that matters for your decision is one built from your own representative data. Everything else is a proxy.

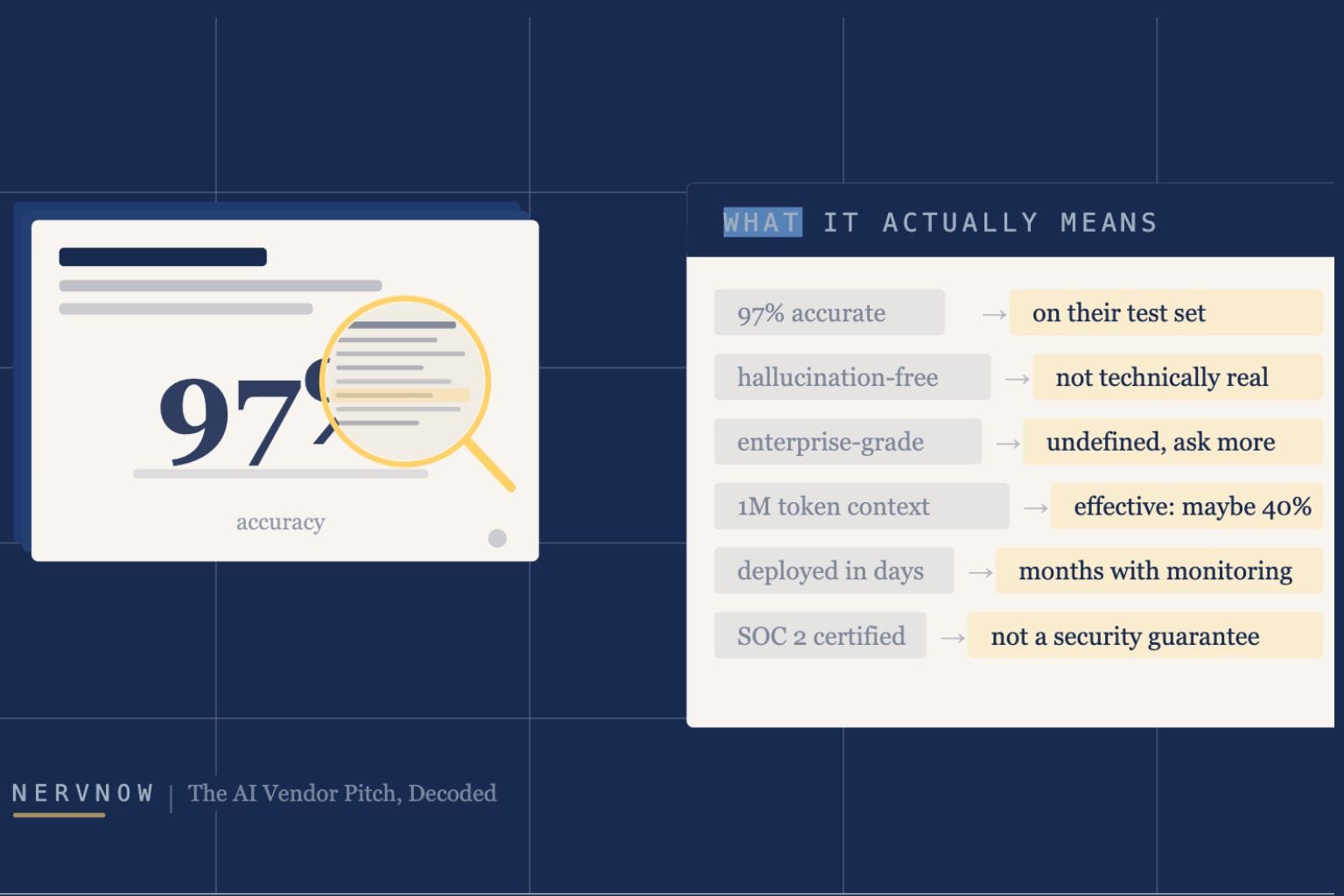

2. Accuracy and Hallucination Claims

These are the most consequential claims in any AI pitch, and the most frequently misrepresented. Consider them separately.

Accuracy

Accuracy is a property of a model evaluated on a specific task, using a specific metric, on a specific dataset. When a vendor says 95% accurate, the immediate questions are: accurate at what, measured how, on whose data, compared to what baseline? A retrieval system might be 95% accurate at returning a relevant document, and that says nothing about whether the model generates a correct answer from that document. A classification model might be 95% accurate on a balanced test set but perform at 60% on the class distribution your production traffic actually produces.

Hallucination-free

Hallucination-free is not a technically coherent claim for any general-purpose language model currently available. Hallucination, where a model generates plausible-sounding but factually incorrect or fabricated content, is a structural property of how these models work. A model that generates text by predicting likely continuations will occasionally predict wrong continuations confidently.

What RAG (Retrieval-Augmented Generation) does is reduce the scope for hallucination by grounding the model’s response in retrieved documents. It does not eliminate hallucination. A model can still misread, misrepresent, or confabulate details from a document that is directly in front of it. Grounded means the system attempts to use a reference source; it does not guarantee the output faithfully represents that source.

How do you define a hallucination in your evaluation? What is the measurement methodology?

What is the hallucination rate on out-of-distribution or low-confidence queries?

What happens when the retrieved document does not contain the answer?

3. Context Window Claims

Context window size has become a marketing metric. The progression from 4K to 8K to 128K to 1M tokens has been rapid, and vendors have been eager to lead with it. The number is real. What it implies about utility is a different matter.

There is a well-documented phenomenon called the lost-in-the-middle problem. Models perform significantly worse at retrieving or reasoning about information positioned in the middle of a long context compared to information at the beginning or end. A model with a 1 million token window can technically ingest 1 million tokens. Whether it pays equal attention to all of it is a separate question, and the honest answer for current models is that it does not.

The second issue is cost and latency. Processing a 1 million token context is not equivalent in cost to processing a 10,000 token context. Compute scales with context length, often superlinearly depending on the architecture. Vendors quote context window size as a capability; they rarely volunteer the per-query cost at that context length or the latency implications.

What is your retrieval accuracy on a needle-in-a-haystack test at 200K tokens? At 500K?

What is the per-query cost and p95 latency at our expected context length?

What context length would you recommend for a production deployment, given quality and cost tradeoffs?

4. Speed and Latency Claims

Latency benchmarks are among the most misleading numbers in AI procurement because they are almost never collected under conditions that resemble your production environment.

There are two distinct latency metrics that vendors frequently conflate. Time to first token (TTFT) measures how long before the model begins generating output. Throughput measures how many tokens per second the model generates once it starts. For interactive applications like chatbots, copilots, and voice interfaces, TTFT is what the user experiences as lag. For batch processing pipelines, throughput matters more. A vendor quoting 200 tokens per second may be quoting throughput on a single unloaded request, and under concurrent load across shared infrastructure, both numbers change significantly.

Shared infrastructure is another variable that rarely appears in vendor pitch materials. Most API-based AI services run on shared compute, which means your query competes with other tenants’ queries for GPU time. Latency under real conditions can be highly variable, and p95 latency, which represents what your slowest 5% of users experience, can be multiples of the quoted average.

What are your p50 and p95 TTFT numbers under concurrent production load?

Is this on dedicated or shared infrastructure, and what SLA do you offer on latency?

How does throughput degrade at 10x our expected query volume?

5. Enterprise-Grade Claims

Enterprise-grade is a positioning statement, not a technical specification. Behind it are several distinct claims that need to be examined individually.

What SOC 2 actually certifies

SOC 2 Type II is a widely cited compliance credential that audits a company’s security controls across five trust service criteria: security, availability, processing integrity, confidentiality, and privacy. The audit covers whether the controls a company describes are actually in place and operating effectively over a review period, typically six to twelve months.

What SOC 2 does not certify is the strength of those controls, whether they are appropriate for your risk tolerance, or whether a breach cannot occur. It certifies that described controls exist and function as described. Two companies can both hold SOC 2 certifications with substantially different security postures.

Data training claims

Your data will not be used for training is a contractual commitment, not a technical one. For it to be meaningful in practice, the infrastructure must have logging, access controls, and data pipeline separations that technically prevent query data from entering training pipelines. Ask for the technical architecture, not the contractual language. The contract states what they have promised. The architecture determines whether the promise is enforceable.

SLA language

Uptime SLAs in AI vendor contracts frequently include exemptions that substantially erode the guarantee. Scheduled maintenance, force majeure clauses, degraded performance that falls short of complete outage, and the definition of availability versus correct output availability are all common vectors. Read what the SLA excludes before relying on the headline percentage.

Where is our data physically processed? Is India-region processing available?

Walk me through the technical architecture that enforces the no-training commitment.

What are the SLA exclusions, and how is a credit calculated when the SLA is breached?

What is your breach notification timeline and process?

6. Integration and Deployment Claims

Integration timelines are the most consistently optimistic claims in AI vendor pitches, and they are almost always wrong in the same direction.

What vendors call integration is typically API connectivity, meaning a call to their endpoint returns a response. A production deployment involves substantially more: authentication and access management, data pipeline construction to move your data into the right format at the right time, prompt engineering to make the model behave consistently on your inputs, evaluation infrastructure to measure whether it is working, monitoring to detect when it stops working, and a fallback plan for when it does.

There is also the maintenance cost, which vendors never lead with. AI systems degrade over time in ways that traditional software does not. User behavior shifts. The underlying model gets updated. Prompt drift, where a prompt that worked reliably begins producing inconsistent outputs as the model changes, is a real operational problem that requires active monitoring to catch.

Walk me through a typical enterprise deployment at 30 days, 90 days, and 6 months. What broke and how was it fixed?

What monitoring do you provide out of the box, and what do we need to build ourselves?

What happens to our deployment when you update the underlying model?

What is the rollback procedure if output quality degrades post-deployment?

A Reusable Evaluation Posture

The six sections above cover specific claim categories. There is also a more general posture that cuts through almost any AI vendor claim without requiring category-specific knowledge. It comes down to three questions.

Measured how, by whom, on what data?

Every performance claim is a measurement. A measurement without a methodology is a marketing statement. Ask for the evaluation design: what task, what dataset, what metric, conducted by whom. If the vendor measured it themselves, on data they selected, using a metric they defined, weight the result accordingly.

What does this number not tell me?

Every headline metric hides a distribution. A 95% accuracy rate carries a 5% failure rate, and what matters is what those failures look like and when they occur. Ask what the failure mode is, not just the success rate. The shape of failure is usually more operationally important than the headline number.

What happens when it fails?

Reliability and recoverability are underweighted in AI vendor evaluations. Every system fails; what distinguishes production-grade systems is how failure is detected, how quickly it is surfaced, and what the recovery path looks like. A vendor who has not thought through their failure modes in detail is telling you something important about their operational maturity.

These three questions do not require deep technical expertise to ask. They require a willingness to slow down a pitch and demand specificity. Vendors with genuinely robust systems will answer them readily. Those whose claims are primarily marketing will not.

The best AI procurement decisions are made by the organizations that ask precise questions and insist on precise answers.

NervNow covers AI strategy, infrastructure, and policy for senior technology and business decision-makers. nervnow.com

Disclaimer: The vendor claims cited in this piece are representative of patterns commonly observed across the AI industry. No specific company or product is referenced. Readers are encouraged to conduct independent due diligence before making procurement decisions.

ALSO READ:

Why Every AI Chatbot Seems to Give the Same Advice? The Artificial Hivemind Effect, Explained

Prompts, RAG, or Fine-Tuning? The AI Stack Decision Most Teams Get Wrong

LLM SEO: How to Get Your Brand Mentioned by AI?

Why Europe Leads AI Regulation, Lags in AI Power