© 2026 NervNow™. All rights reserved.

nEye.ai Raises $80M for AI Data Centers

Silicon Valley startup nEye.ai has secured $80M in Series C funding to accelerate its optical circuit switching chip, pushing total capital raised to $152M.

Silicon Valley startup nEye.ai has announced an $80 million Series C funding round, bringing its total capital raised to $152 million. The round was led by Sutter Hill Ventures, with participation from CapitalG (Alphabet’s independent growth fund), M12 (Microsoft’s venture fund), Socratic Partners, and Micron Technology.

Rather than broadening its research scope, nEye.ai is directing the new capital toward a single goal: scaling high-volume manufacturing of its proprietary Optical Circuit Switches (OCS). Specifically, the company is moving from traditional mechanical assemblies to a foundry-compatible, wafer-scale production process, a shift expected to substantially reduce per-unit costs at volume.

To understand the significance of this raise, it helps to look first at the problem nEye.ai is solving. Modern AI training clusters, often described as gigawatt-scale “AI factories,” link together thousands of GPUs, CPUs, and memory units. The bottleneck, however, is not always raw compute; often it is the speed and efficiency with which data moves between those components.

Traditionally, this data movement has relied on electrical switches, which generate significant heat and consume large amounts of power. nEye.ai’s solution instead routes data using light rather than electricity, sharply cutting both latency and power draw, two constraints that hyperscale data centers can no longer afford to ignore.

What makes nEye.ai technically distinctive is its integration of three technologies onto a single chip: silicon photonics, MEMS (micro-electro-mechanical systems), and CMOS. This architecture, known as “OCS-on-a-chip,” delivers a much smaller physical footprint and substantially lower energy consumption than conventional switching systems.

The chip also supports what the industry calls composable infrastructure: data centers can dynamically pool and reassign GPUs, CPUs, and memory resources in real time as AI workload demands shift. This flexibility is increasingly seen as essential for training and serving next-generation AI models.

“Optical switching is now a requirement to unlock the true potential of AI training and inference at scale,” said James Luo, General Partner at CapitalG.

Meanwhile, Stefan Dyckerhoff, Managing Director at Sutter Hill Ventures, who has also joined nEye.ai’s board of directors, noted that the OCS-on-a-chip approach offers “a unique path to meeting the extreme density and power constraints of modern hyperscale data centers.” In terms of market size, the optical circuit switching sector is projected to surpass $3 billion within the next three years, driven by the accelerating compute demands of generative AI.

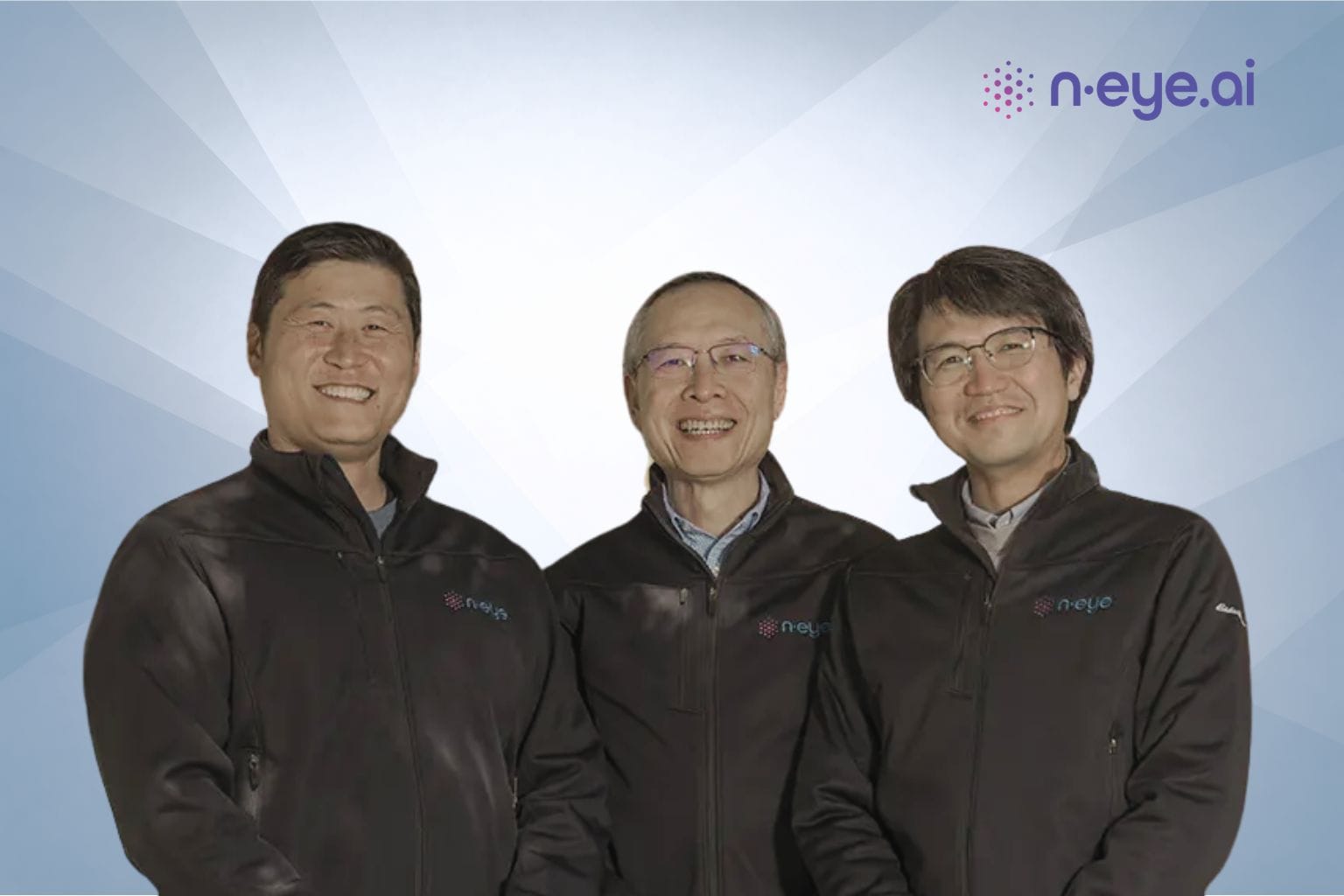

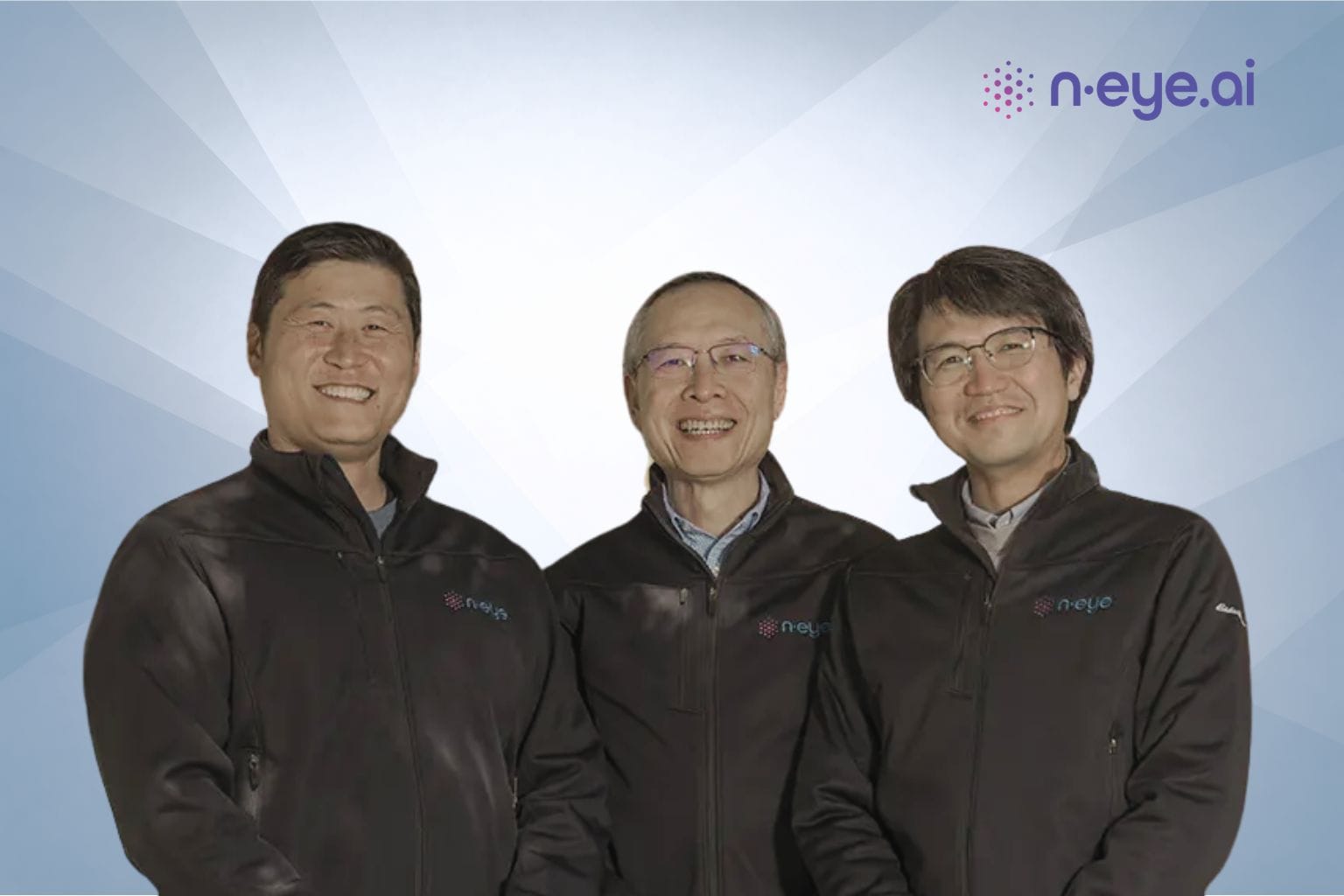

Founded in 2020 by co-founders Tae Joon Seok and Kyung, nEye.ai is headquartered in Santa Clara, California. The company’s mission centres on dismantling the networking bottlenecks that limit sustainable AI infrastructure at scale.

Disclaimer: This news is based on publicly available information. NervNow has not independently verified the details.
